Google's been talking. You just couldn't hear it.

Other tools show you queries per URL. This shows you queries per topic.

FIRST …

Floyi was built on one idea: your brand and your audience should drive everything.

Not keywords. Not templates. Your ICPs, your positioning, your knowledge base. That context flows from the brand foundation through to topic research, clustering, the map, the briefs and the drafts. One connected system, no context loss between steps.

But the loop had a gap.

You could see rankings. You could track visibility across Google and AI engines. What you couldn't see was which of your topics Google is already sending people to, and where those searches actually belong inside your content strategy.

That's different from pulling a query report. Any tool gives you a URL and the queries driving traffic to it. The old keyword-driven method was to take a query and create an article.

It’s a topic-driven world now.

But take a topic like "agency features for automation platforms." Queries like "what is agency features for automation platforms," "agency features for automation guide" and "agency features review" all belong to that one topic. And they all happen to come from one URL, so there’s no cannibalization occurring with other pages.

In a URL-based view, the list contains scattered queries. You're left guessing whether they belong together and whether multiple pages are competing for the same ground. Map the queries to topics and the picture is immediate.

In this case, every query surfaces the same single page. No cannibalization. Clean. But if three pages were showing up across those queries, you'd see it at the topic level, not buried in rows. You’d need 3 tabs to show each query individually and the pages they show in, along with their clicks, impressions, and position rankings. It’s all in one tab on Floyi.

The Organic Audit maps the Google Search Console queries and URLs back to specific topics in your topical map. You see which silos are earning impressions, where the gaps are and where completing your coverage moves the needle first.
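To make the topic-level view concrete, here is a minimal Python sketch of the idea. It is not how Floyi implements the Organic Audit. The rows, URLs, numbers and the `query_to_topic` mapping are all hypothetical, and the pricing topic was invented only to show what a multi-page topic looks like.

```python
from collections import defaultdict

# Hypothetical Search Console export rows: (query, url, clicks, impressions).
rows = [
    ("what is agency features for automation platforms", "/agency-features", 12, 340),
    ("agency features for automation guide", "/agency-features", 8, 210),
    ("agency features review", "/agency-features", 5, 150),
    ("automation pricing comparison", "/pricing", 3, 90),
    ("automation pricing comparison", "/blog/pricing-guide", 1, 60),
]

# Hypothetical query-to-topic mapping; in practice it would come from your topical map.
query_to_topic = {
    "what is agency features for automation platforms": "agency features for automation platforms",
    "agency features for automation guide": "agency features for automation platforms",
    "agency features review": "agency features for automation platforms",
    "automation pricing comparison": "automation platform pricing",
}


def summarize_by_topic(rows, query_to_topic):
    """Roll query rows up to topics and flag topics served by more than one URL."""
    topics = defaultdict(lambda: {"urls": set(), "clicks": 0, "impressions": 0})
    for query, url, clicks, impressions in rows:
        topic = query_to_topic.get(query, "unmapped")
        entry = topics[topic]
        entry["urls"].add(url)
        entry["clicks"] += clicks
        entry["impressions"] += impressions
    # More than one URL per topic is only a prompt to look closer, not proof of a problem.
    return {
        topic: {**entry, "possible_cannibalization": len(entry["urls"]) > 1}
        for topic, entry in topics.items()
    }


summary = summarize_by_topic(rows, query_to_topic)
for topic, entry in summary.items():
    print(topic, sorted(entry["urls"]), entry["clicks"], entry["impressions"],
          entry["possible_cannibalization"])
```

The agency features topic rolls up to a single URL, so it is not flagged. The made-up pricing topic shows up on two URLs, which is the kind of thing a URL-by-URL report buries in rows.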

Your topical map now talks back.

Last week, I sent out a 2-minute survey. Thank you to everyone who answered it! The info has been very helpful in steering the surfboard to give you what you want.

If you haven’t had a chance to fill it out yet, please take 1-2 minutes to do so, so I can make these weekly emails useful as you grow your businesses.

AD

LLM traffic converts 3× better than Google search

58% of buyers now start their research in ChatGPT or Gemini, not Google. Most startups aren't showing up there yet.

The ones that are get cited by the AI tools their buyers, investors, and future hires already use. And they convert at 3×.

Download the free AEO Playbook for Startups from HubSpot and get the exact steps to start showing up. Five minutes to read.

SEO + GEO

Reporters at the New York Times report that Google's AI Overviews now answer standard factual benchmark questions correctly roughly 9 out of 10 times, based on analysis conducted with an AI startup that tracks the feature. That sounds strong at first glance, but at Google's scale even a 10% error rate means a huge absolute volume of wrong answers showing up in search. With Google “processing more than five trillion searches a year, this means that it provides tens of millions of erroneous answers every hour (or hundreds of thousands of inaccuracies every minute).”

My Take: This is the trap with AI accuracy stats. 90% sounds good until you remember how much volume Google handles and how much trust users place in answers that look definitive. Improving from 85% to 91% matters, but the remaining error rate is still big enough to shape reputation, clicks and public understanding at scale.
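If you want to sanity-check the quoted numbers, the arithmetic fits in a few lines of Python. This is a rough sketch that assumes every search returns an AI Overview and that the error rate is a flat 10%. Neither is exactly true, so treat the result as an upper bound rather than a measurement.

```python
SEARCHES_PER_YEAR = 5e12    # "more than five trillion searches a year" (from the quote)
ERROR_RATE = 0.10           # roughly 1 in 10 answers wrong (from the reported accuracy)
HOURS_PER_YEAR = 365 * 24   # 8,760 hours

# Assumption: every search shows an AI Overview, which overstates the real volume.
errors_per_hour = SEARCHES_PER_YEAR * ERROR_RATE / HOURS_PER_YEAR
errors_per_minute = errors_per_hour / 60

print(f"{errors_per_hour:,.0f} wrong answers per hour")
print(f"{errors_per_minute:,.0f} wrong answers per minute")
```

Under those assumptions the result lands around 57 million per hour and a little under a million per minute, which lines up with the "tens of millions" and "hundreds of thousands" in the quote.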

Ross Simmonds breaks down an analysis of 8,566 keywords where Reddit competes directly with 13 major B2B SaaS domains across four verticals, and the topline result is ugly for most vendors. Reddit reportedly outranks every vendor simultaneously on 50% to 66% of shared keywords in three of the four verticals studied, covering 957,540 monthly searches, and 77% of that winning search volume comes from generic category terms rather than classic review or alternative queries. The study also says Reddit's win rate jumps to 73% to 100% on queries with six or more words, while keywords with CPCs above $50 show a 67.3% Reddit win rate, which means some of the most commercially valuable searches are the ones vendors are losing organically.

My Take: Reddit, Reddit, Reddit! People talk about GEO agencies popping up everywhere, but how about them Reddit agencies. I tested one Reddit service over a year ago and not 1 of the 10+ posts stuck around longer than 2 months 😅

Adam Tanguay explains that backlinks still matter, but AEO authority now depends just as much on repeated mentions, citations and clear entity associations across the web. He pushes teams to create content that is easy for LLMs to extract, with tight definitions, descriptive headers, short paragraphs and explicit context instead of vague references. He also says that the best AEO assets are reference-grade materials like original research, benchmarks, glossaries and visual explainers that journalists, creators and AI systems can reuse.

Molly Nogami and Ben Tannenbaum report that Bing visibility appears to matter more than Google visibility when brands want to show up in ChatGPT recommendations. In their 68-run hotel study, the Baccarat appeared only once, while the Fifth Avenue Hotel showed up 13 times, even though the Baccarat had more reviews, a longer history and similar ratings. Their deeper finding is that winning Google fanout SERPs did not translate into mentions, while stronger placement in Bing fanout results aligned much more closely with ChatGPT visibility.

Danny Goodwin reports that Google says it is aware of weak “best of” listicles and is actively trying to limit that kind of abuse in both Search and Gemini. Through a Lily Ray post, he ties the issue to the FTC's Consumer Review Rule, which bars company-controlled content from being presented as independent reviews and allows penalties that can reach $53,088 per violation. That matters because a lot of “best X” GEO pages are built on thin comparisons, fake scoring systems and self-serving rankings rather than real testing or disclosure.

My Take: This reminds me of the old affiliate site playbook where people would “review” products they barely touched, then get paid to stack certain brands at the top of every best-of article. So in that sense, these listicles are not some new GEO invention. It is the same incentive structure in a new wrapper. The difference now is that Google and regulators both have more reason to treat the pattern as abuse instead of just low-grade publishing. Have you heard of the FTC going after any pay-for-play affiliate sites?

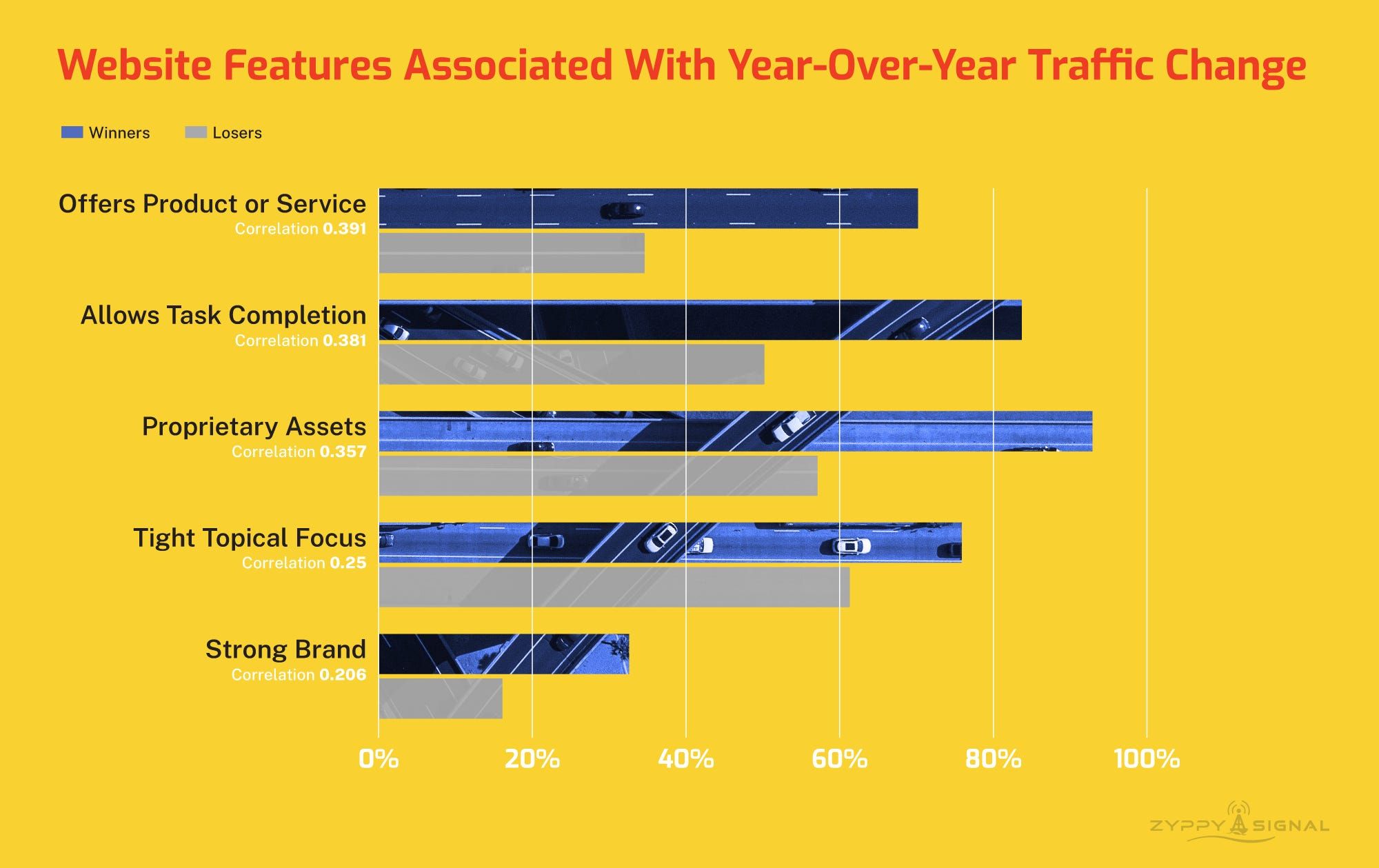

Cyrus Shepard analyzes more than 400 winning and losing websites and finds that the biggest Google winners increasingly share a different business shape from traditional publishers. The strongest pattern is not just better content. It is a stack of business and product traits that are much harder for AI slop content sites to copy.

70.2% of winning sites offered a product or service, compared with 34.6% of losing sites.

83.7% of winners allowed task completion on-site, versus 50.2% of losers.

92.9% of winners had proprietary assets, compared with 57.1% of losers.

75.9% of winners showed tight topical focus, versus 61.3% of losers.

32.6% of winners qualified as strong brands, compared with 16.1% of losers.

Sites with 0 features had a 13.5% win rate.

Sites with 4 features had a 68.1% win rate.

Sites with 5 features had a 69.7% win rate.

SEO + GEO Ripples

John Mueller says Google can handle multiple URLs pointing to the same content and that duplication alone does not trigger a ranking penalty. The real risk is making canonicalization harder on yourself when redirects, internal links and canonical hints send mixed signals.
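One way to spot mixed signals on your own site is to line up the three hints for each URL and see whether they agree. The Python sketch below is only an illustration. The `pages` structure, its field names and the example URLs are hypothetical, and it says nothing about how Google actually picks a canonical.

```python
def find_mixed_signals(pages):
    """Return URLs whose redirect target, rel=canonical and internal links disagree."""
    mixed = {}
    for url, signals in pages.items():
        targets = {
            signals.get("redirects_to"),
            signals.get("rel_canonical"),
            *signals.get("internal_link_targets", []),
        }
        targets.discard(None)  # ignore signals that are not set
        if len(targets) > 1:
            mixed[url] = sorted(targets)
    return mixed


# Hypothetical crawl data for two URLs that serve the same content.
pages = {
    "/shoes?ref=nav": {
        "rel_canonical": "/shoes",
        "internal_link_targets": ["/shoes"],
    },
    "/shoes/": {
        "redirects_to": "/shoes",
        "rel_canonical": "/shoes/",
        "internal_link_targets": ["/shoes/", "/shoes"],
    },
}

mixed = find_mixed_signals(pages)
print(mixed)
```

Here the parameterized URL is consistent, since every hint points at `/shoes`. The trailing-slash URL gets flagged because its redirect, canonical tag and internal links point at two different versions.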

Sundar Pichai says Google Search is becoming an agent manager that will handle multi-step tasks instead of stopping at links and answers. Google is describing a future where search coordinates work across tools, while Search and Gemini stay separate products that overlap in some use cases.

AI

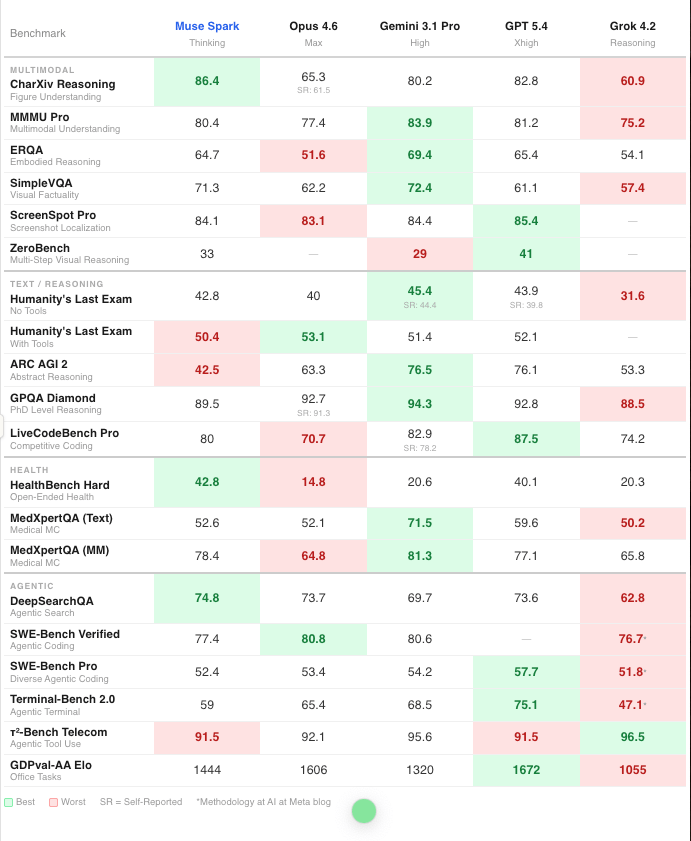

Meta introduces Muse Spark as the first model in its Muse family led by Alexandr Wang. It’s positioned as a natively multimodal reasoning system with tool use, visual chain of thought and multi-agent orchestration. The launch post says Contemplating mode lets parallel agents reason together, pushing Muse Spark to 58% on Humanity's Last Exam and 38% on FrontierScience Research while Meta keeps investing in larger models, long-horizon agents and coding workflows. Meta also claims its rebuilt pretraining stack can reach the same capability level with more than an order of magnitude less compute than Llama 4 Maverick, which is a much bigger signal than the product demo language around personal superintelligence.

Alexandr Wang shared benchmarks on his X account, but they didn’t highlight the best and worst scores. Here’s a heatmap version found on X:

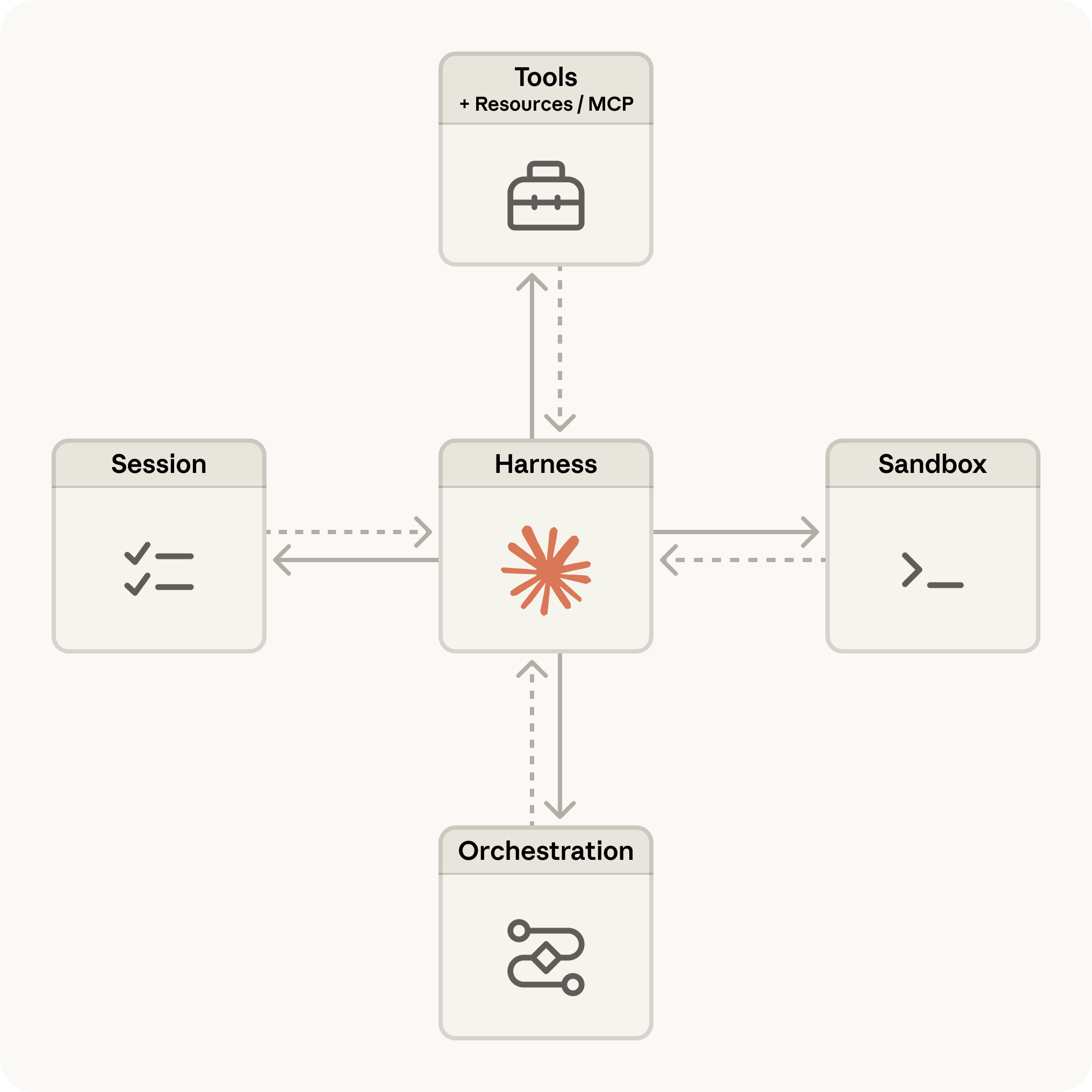

Anthropic launched Claude Managed Agents, a suite of composable APIs for building cloud-hosted agents without having to stand up the usual runtime infrastructure yourself. Anthropic handles sandboxed execution, checkpointing, credential management, scoped permissions, long-running sessions, tracing and agent orchestration, while developers define tasks, tools and guardrails.

Matt Southern reports on Akamai data showing that publishing is absorbing more AI bot traffic than any other media sub-vertical, with OpenAI the largest source, followed by Meta and ByteDance. Akamai says fetcher bots, which retrieve content in real time to answer chatbot questions, may pose a more immediate revenue risk than training crawlers, since they can satisfy user demand without sending people to the publisher's site. Training crawlers made up 63% of AI bot activity targeting media in the second half of 2025, but fetcher bots still accounted for 24% and publishing represented 43% of that fetcher traffic.

My Take: A good reminder that there are two types of AI bots: training crawlers and real-time fetchers. Training crawlers are the long-term memory problem, but fetcher bots are the immediate monetization problem because they can replace the click today.
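If you want to see the split in your own server logs, a rough first pass is to bucket requests by user-agent token. The Python sketch below assumes a small token-to-type mapping based on publicly documented crawler names. Treat that mapping as an assumption and check each vendor's current crawler docs before relying on it. The sample log lines are made up.

```python
from collections import Counter

# Assumed mapping of user-agent tokens to bot type. Verify against each
# vendor's current crawler documentation; names and purposes change.
BOT_TYPES = {
    "gptbot": "training",
    "chatgpt-user": "fetcher",
    "meta-externalagent": "training",
    "meta-externalfetcher": "fetcher",
    "bytespider": "training",
}


def classify_bot(user_agent: str) -> str:
    """Return 'training', 'fetcher' or 'other' based on a user-agent substring match."""
    ua = user_agent.lower()
    for token, bot_type in BOT_TYPES.items():
        if token in ua:
            return bot_type
    return "other"


# Made-up user-agent strings standing in for real log lines.
sample_user_agents = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0)",
    "meta-externalfetcher/1.1",
    "Mozilla/5.0 (compatible; Bytespider)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0",
]

counts = Counter(classify_bot(ua) for ua in sample_user_agents)
print(counts)
```

Substring matching on user agents is easy to spoof, so this is only good for a first look at the training versus fetcher mix, not for enforcement.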

Duncan Lennox argues that most teams think they have a model problem when the real bottleneck is context, meaning the operational knowledge, customer history, process logic and business nuance that AI systems need to produce useful output. He draws a line between raw data and applied context, and argues for capturing business context across sales, marketing and service workflows instead of forcing teams to re-brief AI every time. He also breaks context into five layers: business, team, process, customer and network context. His point is that context compounds while generic AI usage does not.

My Take: Models are getting better fast, but the gap between a smart model and a useful system is still the business context wrapped around it. The lack of context is why so many AI workflows are disappointing in practice. The model can respond, but it does not really know how your company works unless that context has been captured somewhere durable.

Context is why the first step in Floyi is solidifying your brand and buyer personas. Unlike other tools, we don’t let you jump straight into creating briefs and drafts. The first step is to build your business context and knowledge base.

Glenn Gabe found Google testing reusable prompt “Skills” in Chrome Canary for the Gemini side panel, letting users save prompts, name them and call them back later with a slash command. The feature also appears to let Gemini help refine those saved prompts and includes prebuilt options like “Buying advice,” which hints at more guided workflows inside the browser.

My Take: This is a small product change with huge behavior implications. Once people can save repeatable prompt flows inside the browser, they stop treating AI like a blank box and start treating it like a set of shortcuts for real work. I imagine usage increasing fast because the friction of rewriting the same prompt every day disappears.

Anthropic is launching Project Glasswing with partners including AWS, Apple, Cisco, Google, Microsoft and the Linux Foundation to use Claude Mythos Preview for defensive cybersecurity work across critical software. The company says Mythos has already identified thousands of high-severity vulnerabilities, including flaws in every major operating system and web browser, and it highlights examples like a 27-year-old OpenBSD bug, a 16-year-old FFmpeg issue and chained Linux kernel exploits found autonomously. Anthropic is also committing up to $100 million in usage credits and $4 million in donations to open-source security groups while limiting access to a small set of partners and more than 40 additional infrastructure organizations.

My Take: I think this is one of the more serious AI announcements of the year because it is not about demos or consumer features. It is about whether the next jump in model capability makes software defense stronger before it makes software exploitation cheaper. If Anthropic is right about the pace here, then cybersecurity is becoming one of the clearest tests of whether frontier AI labs can roll out dangerous capability responsibly.

AI Ripples

OpenAI launched a new $100 ChatGPT Pro tier, positioning it as the option for longer, higher-effort Codex sessions with 5x more Codex usage than Plus and a temporary bump to as much as 10x usage through May 31. It’s about time OpenAI offered a plan between $20 and $200.

Microsoft's Copilot terms still say the product is for entertainment purposes only, even as the company pushes Copilot hard into enterprise workflows. Microsoft says the wording is legacy language and will be updated. Are you not entertained?

OpenAI added a Prompting Fundamentals page to its Academy. It’s part of a broader set of new Academy docs aimed at helping users get more useful output from AI.

Google added notebooks to Gemini, giving users a way to organize chats, files and custom instructions in one synced workspace that also connects directly with NotebookLM. Google is trying to turn Gemini and NotebookLM into a shared project layer instead of keeping them as separate AI experiences.

Gemini can now generate interactive simulations and models inside chat, letting users explore concepts like orbital physics or molecular structure through sliders and live visualizations instead of static diagrams.

Google published a detailed prompting guide for Lyria 3 Pro, covering structure, vocal control, lyrics, multimodal inputs and workflow tactics for music generation.

SOCIAL MEDIA

YouTube is rolling out avatar tools that let creators generate a digital version of themselves to make videos that look and sound like them, using existing ingredients-to-video systems inside the YouTube mobile app and YouTube Create. The company is positioning this as a safer, more controlled way to use AI likeness tools, with output labeled as AI, avatar use restricted to the creator, and deletion available at any time. The bigger story is that YouTube is moving cloned presence from novelty into native creator workflow, which lowers the barrier to making more content without always being on camera. For social teams and creators, that makes synthetic presence a real publishing tool, not just an experiment on the edge of the platform.

My Take: This is one of those launches that sounds gimmicky until you think about how many creators already struggle to scale filming, reshoots and versioning. If YouTube can make a creator's digital double feel usable without breaking trust, this becomes a serious content multiplier. The hard part will be keeping the line clear between helpful production efficiency and content that starts to feel uncanny or disposable.

Social Ripples

Nikita Bier says X is tightening product rules to curb manipulation and low-quality activity, but many people in the thread are complaining about false positives and overly broad enforcement. That tension is becoming a recurring platform story: every attempt to clean up spam or gaming also risks sweeping up legitimate users who trigger the wrong signals.

WAYS WE CAN WORK TOGETHER

Floyi - Build Topical Authority that wins in Google and AI Search. Don’t just plan your content strategy - make it unstoppable.

TopicalMap.com Service - Let us do the heavy lifting. We handle the research, structure, and strategy. You get a custom topical map designed to boost authority and dominate your niche and industry.

Topical Maps Unlocked 2.0 - Unlock the blueprint to ranking success. Master the art of structuring content that search engines (and your audience) love - and watch your rankings soar.

AD

The AI Playbook for Video Teams That Can't Slow Down

Wistia's new AI Video Marketing Trends report shows how marketers are using AI to move faster, improve quality, and extend the life of every video. See how leading teams are driving results without adding more work.

LIKE DIGITAL SURFER?

Find me and others in the Digital Surfer Discord community.

I’d also love to know what you think and if you have any ideas for the newsletter. Reply or email me at [email protected].

I’d also appreciate it if you shared it with fellow digital surfers.

Have a great week taking your SEO and digital marketing to another level!

And don’t forget to drag the Digital Surfer emails to your Primary Inbox 🌊
